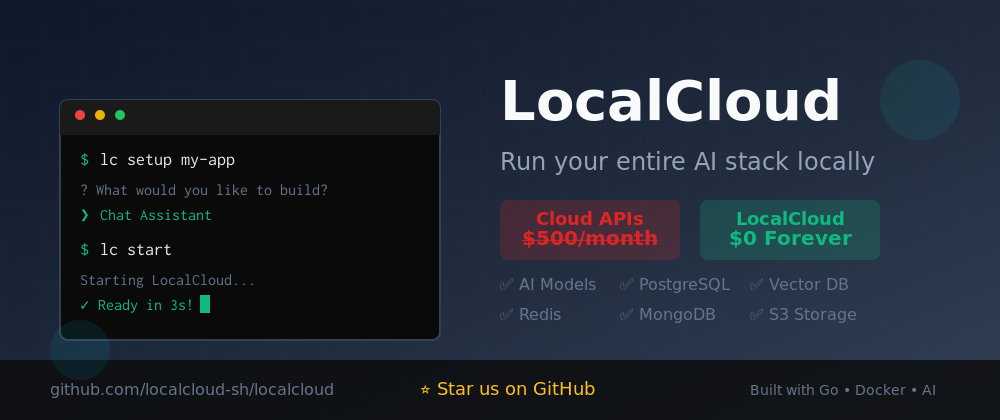

LocalCloud brings your entire cloud stack for AI development to your local machine. No more $500/month API bills or rate limits during development.

What you get:

- Multiple LLMs (Llama, Qwen, Mistral) via Ollama
- PostgreSQL with pgvector for RAG applications
- MongoDB for flexible data storage
- Redis for caching and queues
- S3-compatible object storage (MinIO)
- All configured and ready with one command
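
Once the stack is running, a quick reachability check can confirm which services are up. The sketch below is illustrative only: it assumes each service listens on its upstream default port on localhost (Ollama 11434, PostgreSQL 5432, MongoDB 27017, Redis 6379, MinIO 9000). LocalCloud's actual port mapping is not stated here and may differ. The names `DEFAULT_PORTS`, `is_port_open`, and `check_services` are hypothetical helpers, not part of LocalCloud.

```python
import socket

# Upstream default ports for each tool. Whether LocalCloud exposes the
# services on these exact ports is an assumption; adjust to your setup.
DEFAULT_PORTS = {
    "ollama": 11434,
    "postgres": 5432,
    "mongodb": 27017,
    "redis": 6379,
    "minio": 9000,
}


def is_port_open(host: str, port: int, timeout: float = 1.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


def check_services(ports=DEFAULT_PORTS, host="127.0.0.1"):
    """Map each service name to True (reachable) or False (not reachable)."""
    return {name: is_port_open(host, port) for name, port in ports.items()}


if __name__ == "__main__":
    for name, up in check_services().items():
        print(f"{name:10s} {'up' if up else 'down'}")
```

An open port only shows that something is listening there. It does not prove the service is healthy or that it is the expected one, so treat this as a first smoke test.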

Built for modern AI development - works seamlessly with Claude Code, Cursor, and Gemini CLI. Perfect for MVPs, POCs, hackathons, and privacy-first enterprises.

Set up in 30 seconds: `lc setup && lc start`

Open source, Docker-based, runs on 4GB RAM.


Publisher: Melih Gürgah

Launch Date: 2025-07-23

Platform: desktop

Pricing: free

Tech Stack: #LLM #Developer Tools #AI Development #AI Ops #Open Models
